Our AI & HPC Infrastructure

Enterprise AI & HPC Infrastructure

Build Your AI Factory with HPC Power

End-to-end infrastructure solutions for building, deploying, and scaling AI workloads across modern data centers.

Scalable Infrastructure for Training Advanced AI Models

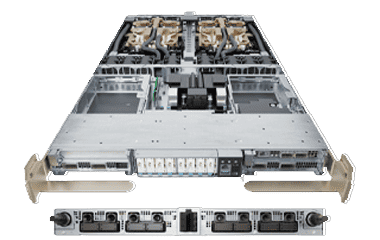

AI Training Platforms

AI Training Platforms for Large-Scale Model Development

AI training platforms are purpose-built infrastructure environments designed to develop and train machine learning and deep learning models using large datasets and high-performance compute resources.

These platforms combine GPU-accelerated servers, high-throughput storage systems, and low-latency networking to enable efficient parallel processing. Training workloads require continuous data movement between storage and compute nodes, making balanced system design critical for performance.

As AI models increase in size and complexity, training environments must scale horizontally, allowing multiple compute nodes to operate together as a unified system while maintaining synchronization and performance consistency.

Architecture Role

AI training platforms act as the core processing environment for model development, forming a tightly integrated system where compute, storage, and networking operate as a single optimized layer.

Within this architecture:

- GPU compute nodes execute parallel model training tasks

- High-speed storage systems continuously supply training data

- Low-latency fabric enables fast communication between nodes

These platforms are typically deployed as clusters, where performance depends on maintaining balance between compute power, data throughput, and network efficiency. Any bottleneck in one layer directly impacts overall training performance.
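The point about bottlenecks can be made concrete with a rough back-of-the-envelope model. The sketch below is a simplification, not a description of any specific platform: it assumes each layer can be summarized by one sustained rate in training samples per second, and the class name, field names, and figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ClusterRates:
    """Sustained rate each layer can support, in training samples per second.

    All values are illustrative assumptions, not measurements.
    """
    compute: float  # samples/s the GPU nodes can process
    storage: float  # samples/s the storage tier can deliver
    network: float  # samples/s the fabric can sustain while nodes synchronize


def effective_throughput(rates: ClusterRates) -> float:
    """The slowest layer caps the throughput of the whole pipeline."""
    return min(rates.compute, rates.storage, rates.network)


def bottleneck(rates: ClusterRates) -> str:
    """Return the name of the layer that limits training throughput."""
    layers = {
        "compute": rates.compute,
        "storage": rates.storage,
        "network": rates.network,
    }
    return min(layers, key=layers.get)


# Hypothetical cluster: fast GPUs fed by a slower storage tier.
example = ClusterRates(compute=12_000, storage=8_000, network=10_000)
limiting_layer = bottleneck(example)          # "storage"
achieved = effective_throughput(example)      # 8000
```

In this example the GPUs could process 12,000 samples per second, but the cluster only achieves 8,000 because storage cannot deliver data any faster. Adding GPUs would not help until the storage tier is upgraded.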

Key Features

- GPU-accelerated compute environments

- High-performance storage integration

- Low-latency networking fabric

- Scalable cluster architecture

- Optimized for parallel processing

Applications Include:

WHY UNITEK?

- Access to GPU-ready infrastructure platforms

- Scalable cluster configurations

- Alignment with modern AI architectures

Deploy AI Models at Scale with Low Latency

AI Inference Platforms

AI Inference Platforms for Real-Time Intelligence

AI inference platforms are designed to run trained AI models in production environments, enabling real-time or near real-time decision-making across applications and services.

Unlike training environments, inference platforms are optimized for efficiency, latency, and scalability rather than raw compute power. They must handle large volumes of requests while maintaining consistent response times.

These systems can be deployed in centralized data centers or distributed environments such as edge locations, depending on application requirements.

Architecture Role

AI inference platforms function as the execution layer of AI systems, where trained models are applied to live data streams.

Within this architecture:

- Compute nodes process incoming data using trained models

- Storage systems provide model access and historical data

- Networking ensures integration with applications and data sources

These platforms are often horizontally scalable and can be distributed across multiple locations to ensure low-latency performance and high availability.
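Consistent response times are usually judged by tail latency rather than the average. The sketch below shows one common way to check this, using a nearest-rank percentile over a set of measured request latencies. The function names, sample values, and latency budget are hypothetical and chosen only for illustration.

```python
import math


def percentile(latencies_ms, pct):
    """Nearest-rank percentile of request latencies, with pct in (0, 100]."""
    if not latencies_ms:
        raise ValueError("at least one latency sample is required")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(latencies_ms)
    # Nearest-rank method: smallest value with at least pct% of samples at or below it.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def meets_latency_budget(latencies_ms, pct, budget_ms):
    """True if the given percentile is within the latency budget."""
    return percentile(latencies_ms, pct) <= budget_ms


# Hypothetical samples in milliseconds: mostly fast, with one slow outlier.
samples = [12, 15, 11, 14, 90, 13, 12, 16, 15, 14]
median_ok = meets_latency_budget(samples, 50, budget_ms=20)  # True
tail_ok = meets_latency_budget(samples, 99, budget_ms=20)    # False
```

The median here looks healthy, while the 99th percentile exposes the slow request. This is why inference capacity is typically sized against a tail percentile rather than the mean.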

Key Features

- Low-latency processing

- Efficient compute utilization

- Scalable deployment models

- Optimized for production workloads

Applications Include:

WHY UNITEK?

- Optimized inference-ready platforms

- Flexible deployment options

- Scalable infrastructure support

Massive Compute Power for Data-Intensive Workloads

GPU Clusters

GPU Clusters for High-Performance Parallel Computing

GPU clusters are distributed computing environments consisting of multiple GPU-enabled servers interconnected through high-speed networking. These clusters enable large-scale parallel processing required for compute-intensive workloads.

By distributing workloads across multiple nodes, GPU clusters significantly reduce processing time and make it possible to handle large datasets and complex computations that would not be feasible on a single system.

Architecture Role

GPU clusters act as the central compute engine for parallel workloads, enabling distributed processing across multiple nodes.

Within this architecture:

- Each node contributes GPU and CPU resources

- High-speed fabric enables fast data exchange between nodes

- Shared or distributed storage provides consistent data access

Cluster performance depends heavily on network efficiency and synchronization between nodes, making balanced infrastructure design essential.
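One standard way to reason about why synchronization matters is Amdahl's law, which this page does not name but which is widely used for this kind of estimate. The sketch below treats the time spent on synchronization and other non-parallel work as the serial fraction. That is a simplifying assumption, and the fractions and node counts are hypothetical.

```python
def amdahl_speedup(parallel_fraction: float, nodes: int) -> float:
    """Ideal speedup under Amdahl's law.

    parallel_fraction: share of the work that scales across nodes (0.0 to 1.0).
    nodes: number of nodes sharing the parallel part of the work.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if nodes < 1:
        raise ValueError("nodes must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / nodes)


# Hypothetical workload in which 5% of the time cannot be parallelized.
for node_count in (8, 64, 512):
    print(node_count, round(amdahl_speedup(0.95, node_count), 1))
```

With a 5% serial share, the speedup can never exceed 20x no matter how many nodes are added, which is why reducing communication and synchronization overhead often matters more than adding hardware.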

Key Features

- Multi-node GPU environments

- High-speed interconnects

- Scalable cluster architecture

- Optimized for parallel workloads

Applications Include:

WHY UNITEK?

- Cluster-ready infrastructure platforms

- High-performance configurations

- Support for scalable deployments

Advanced Computing for Scientific and Industrial Workloads

HPC Clusters

High-Performance Computing (HPC) Clusters

HPC clusters are designed to solve complex computational problems by distributing workloads across multiple interconnected compute nodes.

These systems are widely used in scientific research, engineering simulations, financial modeling, and large-scale data analysis, where performance and computational accuracy are critical.

Architecture Role

HPC clusters provide the distributed compute framework for large-scale simulations and analytical workloads, enabling multiple systems to operate as a unified processing environment.

Within this architecture:

- Compute nodes execute parallel workloads

- Networking fabric enables synchronization and communication

- Storage systems provide shared data access

They are optimized for high-throughput processing and efficient workload distribution across nodes.
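Workload distribution can be illustrated with a simple static block decomposition, one of the most basic ways to split independent tasks across nodes. This is a minimal sketch under that assumption: the function name is hypothetical, and production schedulers also account for factors such as uneven task cost and node failures.

```python
def partition_tasks(num_tasks: int, num_nodes: int) -> list[range]:
    """Split task indices 0..num_tasks-1 into contiguous, near-equal chunks.

    The first (num_tasks % num_nodes) nodes each receive one extra task,
    so chunk sizes differ by at most one.
    """
    if num_tasks < 0:
        raise ValueError("num_tasks must not be negative")
    if num_nodes < 1:
        raise ValueError("num_nodes must be at least 1")
    base, extra = divmod(num_tasks, num_nodes)
    chunks = []
    start = 0
    for node in range(num_nodes):
        size = base + (1 if node < extra else 0)
        chunks.append(range(start, start + size))
        start += size
    return chunks


# Ten tasks across three nodes: chunk sizes are 4, 3, and 3.
assignment = partition_tasks(10, 3)
```

Keeping the chunk sizes within one task of each other prevents a single overloaded node from holding up the nodes that finish early.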

Key Features

- Distributed compute architecture

- High-performance interconnects

- Scalable cluster design

- Optimized for compute-intensive workloads

Applications Include:

WHY UNITEK?

- HPC-ready infrastructure platforms

- Scalable cluster solutions

- Optimized performance

Modular AI Infrastructure at Scale

AI Factory – Pod Systems

AI Factory and Pod-Based Infrastructure

AI factory or pod-based systems are modular infrastructure architectures that integrate compute, storage, and networking into scalable building blocks.

These systems are designed for organizations that require large-scale AI capabilities, allowing them to deploy standardized infrastructure units that can be replicated and expanded as demand grows.

They represent the evolution from isolated AI environments to fully integrated, production-scale AI infrastructure.

Architecture Role

AI factory systems represent the complete infrastructure layer, integrating all components required for AI workloads into a unified architecture.

Within this architecture:

- Compute provides processing power

- Storage delivers high-throughput data access

- Networking enables seamless communication between components

These systems are designed for scalability, allowing organizations to expand infrastructure by adding pods while maintaining consistent performance and architecture.
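Because every pod is a standardized unit, capacity planning reduces to counting pods. The sketch below assumes a pod can be described by its GPU count and usable storage. The `PodSpec` name, its fields, and all figures are hypothetical and do not describe any actual product configuration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class PodSpec:
    """Capacity of one standardized pod (illustrative values only)."""
    gpus: int
    storage_tb: float


def pods_needed(pod: PodSpec, required_gpus: int, required_storage_tb: float) -> int:
    """Number of identical pods needed to cover both GPU and storage demand."""
    by_gpus = math.ceil(required_gpus / pod.gpus)
    by_storage = math.ceil(required_storage_tb / pod.storage_tb)
    # The most demanding resource decides the count; always deploy at least one pod.
    return max(by_gpus, by_storage, 1)


# Hypothetical pod with 32 GPUs and 500 TB of usable storage.
pod = PodSpec(gpus=32, storage_tb=500.0)
count = pods_needed(pod, required_gpus=100, required_storage_tb=1200.0)  # 4
```

Here the GPU requirement calls for four pods while storage alone would need only three, so four pods are deployed and some storage capacity is left as headroom.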

Key Features

- Modular infrastructure design

- Scalable deployment

- Integrated compute, storage, and networking

- High-performance architecture

Applications Include:

WHY UNITEK?

- End-to-end infrastructure platforms

- Scalable AI solutions

- Alignment with modern AI architecture trends