
Power and Cooling Challenges in AI Data Centers

Addressing Power and Thermal Challenges

Artificial intelligence is rapidly transforming the design and operation of modern data centers. While much of the attention around AI infrastructure focuses on GPUs and high-performance computing systems, one of the most significant challenges lies in power delivery and cooling infrastructure.

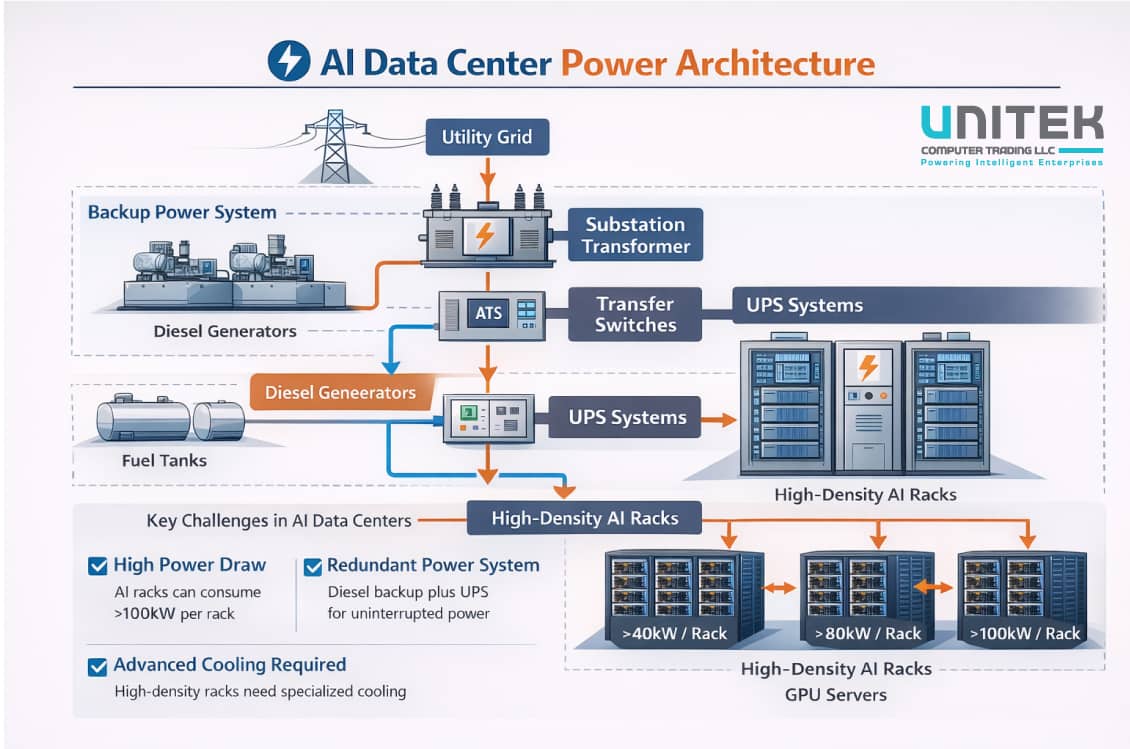

Traditional enterprise data centers were designed for workloads such as virtualization, enterprise applications, and databases. These environments typically operate with rack power densities between 5kW and 15kW. In contrast, modern AI infrastructure, especially GPU clusters used to train large models, can exceed 40kW, 80kW, or even 100kW per rack.

This dramatic increase in power density introduces new engineering challenges in energy distribution, thermal management, facility design, and operational efficiency. Organizations deploying AI infrastructure must rethink how power and cooling systems are designed in order to support high-density compute environments.

This article explores why AI workloads require significantly more power, how cooling technologies are evolving to meet these demands, and what enterprises must consider when building AI-ready data centers.

Why Do AI Workloads Consume So Much Power?

AI workloads differ significantly from traditional enterprise applications in terms of computational intensity.

Training and running machine learning models requires massive numbers of mathematical operations, particularly matrix multiplications and other tensor computations. These operations are highly parallel and ideally suited to GPU architectures.

Unlike CPUs, which are optimized for fast sequential processing and general-purpose workloads, GPUs are designed to execute thousands of operations simultaneously. This parallelism enables dramatic performance gains, but it also concentrates much more power in each device: a single data center GPU can draw several hundred watts under sustained load.

Because of these characteristics, and because training clusters pack several high-power GPUs into each server and keep them near full utilization for long periods, AI infrastructure requires far more electrical capacity than traditional data center environments.
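A rough estimate shows how quickly this adds up at the rack level. This is a minimal sketch: the function name `rack_power_kw` and every number in the example (four servers per rack, eight GPUs per server at 700 W each, and 3,000 W per server for CPUs, memory, networking, storage, and fans) are illustrative assumptions, not figures for any specific product.

```python
def rack_power_kw(servers_per_rack: int, gpus_per_server: int,
                  gpu_watts: float, other_watts_per_server: float) -> float:
    """Rough rack IT load: (GPU power + everything else) per server, times servers."""
    server_watts = gpus_per_server * gpu_watts + other_watts_per_server
    return servers_per_rack * server_watts / 1000.0


# Hypothetical configuration, not a specific product.
ai_rack = rack_power_kw(servers_per_rack=4, gpus_per_server=8,
                        gpu_watts=700, other_watts_per_server=3000)
print(f"AI rack: {ai_rack:.1f} kW")  # AI rack: 34.4 kW
```

Even this moderate hypothetical configuration lands at more than twice the 15kW upper end of traditional enterprise racks; denser servers or more servers per rack push the figure higher.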

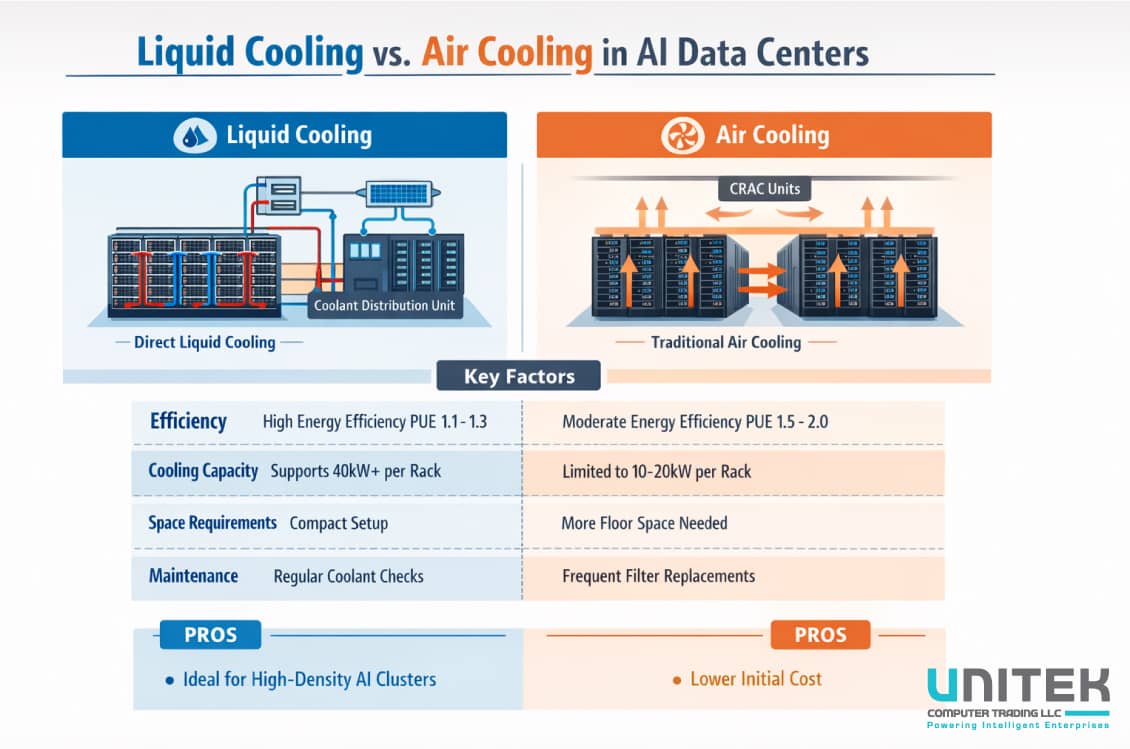

The Limits of Traditional Data Center Cooling

Traditional data centers rely primarily on air cooling systems to manage heat generated by servers and networking equipment.

In these environments, cold air is delivered through raised floors or cooling units and circulated through server racks. Hot air generated by the equipment is then removed through return vents or containment systems.

While air cooling works effectively for lower-density racks, it becomes increasingly inefficient as power densities rise.

When rack densities exceed approximately 30kW, air cooling begins to reach practical limitations. Moving sufficient volumes of air to dissipate heat becomes extremely difficult and energy-intensive.
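The difficulty follows from the sensible-heat equation, Q = ρ · c_p · V̇ · ΔT: for a given inlet-to-outlet temperature rise ΔT, the airflow a rack needs grows linearly with its power, while fan power grows roughly with the cube of fan speed (the fan affinity laws). The sketch below, assuming typical air properties near room temperature and a hypothetical 12 K temperature rise, estimates the airflow required at several rack densities.

```python
# Approximate properties of air near 20 °C at sea level (assumed values).
AIR_DENSITY_KG_M3 = 1.2
AIR_CP_J_PER_KG_K = 1005.0
M3S_TO_CFM = 2118.88  # 1 m^3/s expressed in cubic feet per minute


def required_airflow_m3s(heat_w: float, delta_t_k: float) -> float:
    """Airflow needed to remove heat_w with a temperature rise of delta_t_k.

    Rearranges the sensible-heat equation Q = rho * cp * V_dot * dT.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)


# Hypothetical 12 K inlet-to-outlet rise across the rack.
for rack_kw in (10, 30, 80):
    flow = required_airflow_m3s(rack_kw * 1000, delta_t_k=12.0)
    print(f"{rack_kw:>3} kW rack: {flow:5.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM)")
```

Airflow scales eightfold from the 10 kW rack to the 80 kW rack, and because fan power rises much faster than airflow, the energy cost of moving that air grows even more steeply.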

Hot spots may develop within racks, and maintaining stable temperatures becomes more challenging.

As AI workloads push rack densities beyond traditional limits, data center operators are turning to more advanced cooling solutions.

Liquid Cooling for AI Infrastructure

One of the most promising solutions for managing high-density AI workloads is liquid cooling.

Liquids are significantly more effective at transferring heat than air, allowing data centers to dissipate large amounts of thermal energy more efficiently.
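One way to quantify this is to compare how much fluid must flow to remove the same heat at the same temperature rise. The sketch below assumes approximate room-temperature properties for air and plain water (real coolant loops often use water-glycol mixtures, which have somewhat lower heat capacity) and an illustrative 30 kW load with a 10 K rise; the `FLUIDS` table and function name are hypothetical.

```python
# Approximate room-temperature properties (assumed values).
FLUIDS = {
    "air": {"density_kg_m3": 1.2, "cp_j_per_kg_k": 1005.0},
    "water": {"density_kg_m3": 997.0, "cp_j_per_kg_k": 4186.0},
}


def volumetric_flow_m3s(fluid: str, heat_w: float, delta_t_k: float) -> float:
    """Flow rate needed to carry heat_w at a temperature rise of delta_t_k."""
    props = FLUIDS[fluid]
    return heat_w / (props["density_kg_m3"] * props["cp_j_per_kg_k"] * delta_t_k)


heat_w, delta_t_k = 30_000.0, 10.0  # illustrative 30 kW load, 10 K rise
air = volumetric_flow_m3s("air", heat_w, delta_t_k)
water = volumetric_flow_m3s("water", heat_w, delta_t_k)

print(f"air:   {air:.2f} m^3/s")
print(f"water: {water * 60_000:.1f} L/min")  # 1 m^3/s = 60,000 L/min
print(f"air needs about {air / water:,.0f}x the volume flow of water")
```

The ratio depends only on each fluid's volumetric heat capacity (density times specific heat), so it holds for any load: under these assumptions, water carries roughly 3,500 times more heat per unit volume than air.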

Several liquid cooling technologies are now being deployed in AI data centers.

Direct-to-Chip Cooling

Direct-to-chip cooling uses liquid-cooled plates attached directly to processors or GPUs. These plates absorb heat from the components and transfer it to a circulating liquid coolant.

This method allows heat to be removed much more efficiently than traditional air cooling.

Direct-to-chip cooling is becoming increasingly common in high-performance computing environments and AI training clusters.
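Cold plates usually cover only the hottest components, such as GPUs and CPUs, so a direct-to-chip rack generally still rejects some heat to room air. The minimal sketch below splits a rack's heat load between the liquid loop and the air. The capture fraction is an assumed input that varies by server design, and the 80 kW rack with 75% capture is purely illustrative.

```python
def split_heat_load(rack_kw: float, liquid_capture: float) -> tuple[float, float]:
    """Split rack heat (kW) into (liquid loop, residual air) portions.

    liquid_capture is an assumed fraction (0..1) of heat removed by cold plates;
    the remainder (components not under plates, power supplies, etc.) goes to air.
    """
    if not 0.0 <= liquid_capture <= 1.0:
        raise ValueError("liquid_capture must be between 0 and 1")
    to_liquid = rack_kw * liquid_capture
    return to_liquid, rack_kw - to_liquid


liquid_kw, air_kw = split_heat_load(80, 0.75)
print(f"liquid loop: {liquid_kw:.0f} kW, residual air load: {air_kw:.0f} kW")
```

In this example the residual 20 kW air load falls back under the approximately 30kW practical limit for air cooling, which is why direct-to-chip loops are commonly paired with conventional air handling.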

Immersion Cooling

Immersion cooling takes a different approach by submerging servers in specialized dielectric fluids. Because these fluids do not conduct electricity, they can absorb heat directly from the components without damaging electronic systems.

Immersion cooling systems can handle extremely high power densities and offer excellent thermal efficiency.

Although this technology is still evolving, it is gaining attention as a potential solution for future AI data centers.

Power Distribution Challenges

Cooling is only one part of the equation. AI infrastructure also places significant demands on electrical distribution systems.

Traditional enterprise data centers were designed to deliver moderate power levels across large numbers of racks. AI clusters, however, concentrate large amounts of power in relatively small areas.

This shift introduces several challenges.

Power delivery capacity

Facilities must be able to deliver sufficient electrical power to support high-density compute racks.

Redundant power architecture

Mission-critical AI environments require redundancy to ensure uninterrupted operation.

Power efficiency

Higher energy consumption can significantly increase operational costs if not carefully managed.

To address these challenges, many modern AI data centers incorporate advanced power distribution systems and high-efficiency power supplies.
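Concentrated rack loads translate directly into large currents. The sketch below applies the standard balanced three-phase formula, I = P / (√3 · V_LL · PF), to a 2N design in which A and B feeds normally share a rack's load but each must be able to carry all of it if the other fails. The 415 V line-to-line voltage, 0.95 power factor, and 80 kW rack are assumptions chosen for illustration.

```python
import math


def three_phase_current_a(load_kw: float, line_voltage_v: float,
                          power_factor: float = 0.95) -> float:
    """Line current of a balanced three-phase load: I = P / (sqrt(3) * V_LL * PF)."""
    return load_kw * 1000.0 / (math.sqrt(3) * line_voltage_v * power_factor)


# Illustrative values: 80 kW rack, 415 V line-to-line, power factor 0.95.
rack_kw, volts = 80.0, 415.0

# 2N: A and B feeds normally share the load roughly equally...
normal_per_feed = three_phase_current_a(rack_kw / 2, volts)
# ...but each feed must be sized to carry the whole rack after a failure.
failover_per_feed = three_phase_current_a(rack_kw, volts)

print(f"per feed, normal operation: {normal_per_feed:.0f} A")
print(f"per feed, after failover:   {failover_per_feed:.0f} A")
print(f"10 kW rack for comparison:  {three_phase_current_a(10.0, volts):.0f} A")
```

Actual circuit and breaker sizing must also follow local electrical codes (for example, continuous-load derating) and equipment ratings, which this sketch ignores.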

Energy Efficiency and Sustainability

The rapid growth of AI infrastructure has also raised concerns about energy consumption and environmental sustainability.

Large AI training clusters can consume enormous amounts of electricity, prompting organizations to seek more energy-efficient designs.

Energy efficiency strategies include:

- Optimizing cooling technologies

- Improving power supply efficiency

- Deploying energy-efficient processors and accelerators

- Integrating renewable energy sources

Improving the Power Usage Effectiveness (PUE) of data centers has become a key objective for organizations deploying large-scale AI infrastructure.
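PUE is defined as total facility energy divided by the energy delivered to IT equipment, so a value of 1.0 would mean every kilowatt-hour goes to compute. The sketch below computes PUE and shows how it scales the annual energy bill of a constant IT load; the function names, the 1 MW load, the $0.10/kWh electricity price, and the two PUE values compared are hypothetical.

```python
HOURS_PER_YEAR = 8760


def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def annual_energy_cost(it_load_kw: float, pue_value: float,
                       price_per_kwh: float) -> float:
    """Yearly facility energy cost for a constant IT load running all year."""
    return it_load_kw * HOURS_PER_YEAR * pue_value * price_per_kwh


print(pue(1_500, 1_000))  # 1.5
# Hypothetical 1 MW IT load at an assumed $0.10/kWh.
for p in (1.6, 1.2):
    print(f"PUE {p}: ${annual_energy_cost(1_000, p, 0.10):,.0f} per year")
```

Under these assumptions, lowering PUE from 1.6 to 1.2 cuts the annual bill by roughly $350,000 without changing the IT load at all.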

Designing AI-Ready Data Centers

Organizations planning to deploy AI infrastructure must carefully evaluate their facility capabilities.

Important considerations include:

Power capacity

Facilities must support significantly higher rack power densities.

Cooling architecture

Advanced cooling systems may be required to manage thermal loads.

Scalable infrastructure

AI clusters may grow rapidly, requiring flexible facility design.

Networking and layout

Infrastructure must support high-bandwidth networking fabrics connecting GPU clusters.
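These considerations can be turned into a simple pre-deployment check. The sketch below is hypothetical: the `Facility` fields, the `check_deployment` function, and the example numbers are illustrative, and the 30 kW air-cooling threshold is the approximate limit cited earlier in this article rather than a hard rule.

```python
from dataclasses import dataclass

AIR_COOLING_LIMIT_KW = 30.0  # approximate practical limit cited in this article


@dataclass
class Facility:
    it_capacity_kw: float  # total IT power available to the deployment
    max_rack_kw: float     # highest per-rack load the power distribution supports


def check_deployment(facility: Facility, racks: int, rack_kw: float) -> list[str]:
    """Return a list of planning issues; an empty list means none were found."""
    issues = []
    if rack_kw > facility.max_rack_kw:
        issues.append(f"{rack_kw} kW racks exceed the {facility.max_rack_kw} kW per-rack limit")
    total_kw = racks * rack_kw
    if total_kw > facility.it_capacity_kw:
        issues.append(f"cluster needs {total_kw} kW but only {facility.it_capacity_kw} kW is available")
    if rack_kw > AIR_COOLING_LIMIT_KW:
        issues.append("density above ~30 kW: plan for liquid cooling")
    return issues


hall = Facility(it_capacity_kw=1_000.0, max_rack_kw=40.0)
print(check_deployment(hall, racks=20, rack_kw=15.0))  # []
for issue in check_deployment(hall, racks=16, rack_kw=80.0):
    print("-", issue)
```

A traditional 15 kW layout passes every check, while a modest 16-rack GPU cluster at 80 kW per rack trips all three, which is the kind of gap that forces a facility redesign rather than an incremental upgrade.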

Companies designing new AI facilities are increasingly adopting architectures optimized specifically for accelerated computing environments.

Infrastructure providers such as Giga Computing are developing GPU-dense server platforms designed to integrate with modern AI data center designs.

The Future of AI Data Center Design

As AI adoption continues to grow, the next generation of data centers will look very different from traditional enterprise environments.

Future AI data centers will likely feature:

- Ultra-high-density GPU racks

- Advanced liquid cooling systems

- High-capacity power distribution networks

- Optimized networking fabrics for distributed computing

These facilities will function more like industrial-scale computing factories than conventional enterprise data centers.

The organizations that successfully adapt their infrastructure strategies will be better positioned to support the growing demands of artificial intelligence workloads.

Key Takeaways

Artificial intelligence is dramatically increasing the power and cooling requirements of modern data centers.

Traditional infrastructure designed for CPU-based workloads is no longer sufficient to support high-density AI computing environments.

Organizations deploying AI platforms must consider:

- High-density power delivery systems

- Advanced cooling technologies such as liquid cooling

- Efficient thermal management strategies

- Scalable facility designs capable of supporting GPU clusters

As AI continues to reshape the technology landscape, power and cooling infrastructure will become one of the most critical components of next-generation data centers.
